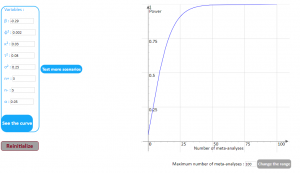

Predicting data saturation using mathematical models from ecological research

Sample size in surveys with open-ended questions relies on the principle of data saturation.

Determining the point of data saturation is complex because researchers have information only on what they have already found.

This app uses mathematical modeling to describe and extrapolate the accumulation of themes during the course of a study and to help researchers determine the point of data saturation.
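The idea of accumulating and extrapolating themes can be illustrated with a minimal sketch. The text above does not say which ecological model the app uses, so the bias-corrected Chao2 richness estimator below is an assumption chosen only for illustration, and the function names and the example responses are hypothetical. Each participant is treated as a sampling unit and each theme as a "species".

```python
from collections import Counter


def accumulation_curve(responses):
    """Cumulative number of distinct themes after each participant."""
    seen = set()
    curve = []
    for themes in responses:
        seen.update(themes)
        curve.append(len(seen))
    return curve


def chao2_estimate(responses):
    """Bias-corrected Chao2 estimate of the total number of themes.

    Illustrative assumption: this is one classical estimator from ecology,
    not necessarily the model implemented in the app.
    """
    m = len(responses)
    # Count in how many participants each theme appears (incidence data).
    incidence = Counter(t for themes in responses for t in set(themes))
    s_obs = len(incidence)
    q1 = sum(1 for c in incidence.values() if c == 1)  # themes seen once
    q2 = sum(1 for c in incidence.values() if c == 2)  # themes seen twice
    return s_obs + ((m - 1) / m) * q1 * (q1 - 1) / (2 * (q2 + 1))


# Hypothetical coded answers: one set of themes per participant.
responses = [
    {"cost", "access"},
    {"cost", "trust"},
    {"access", "time"},
    {"cost", "access", "side effects"},
    {"trust", "cost"},
]

curve = accumulation_curve(responses)
estimated_total = chao2_estimate(responses)
print("Themes found so far:", curve[-1])
print("Estimated total themes:", round(estimated_total, 2))
print("Estimated share already found:", round(curve[-1] / estimated_total, 2))
```

Comparing the observed count with the estimated total gives a rough indication of how many themes may still be undiscovered. With very few participants such an estimate is unstable and should be read with caution.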

Peer-review

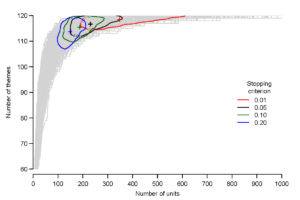

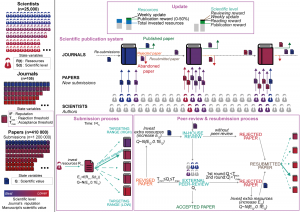

Agent-based model

In this project, we developed an agent-based model (ABM) by adopting a unified view of peer review and publication systems and calibrating it with empirical journal data in the biomedical and life sciences. This ABM may help in better understanding the determinants of the scientific publication system and in assessing the performance of the most promising alternative systems of peer review.

Alternative systems

In this project, we used a previously developed agent-based model of the scientific publication and peer-review (PR) systems calibrated with empirical data to compare the efficiency of five alternative peer-review systems with the conventional system. We modelled two systems of immediate publication, with and without online reviews from the community (crowdsourcing PR), a system with only one round of reviews and revisions allowed (re-review opt-out) and two review-sharing systems in which rejected manuscripts are resubmitted along with their past reviews to any other journal (portable PR) or to only those of the same publisher but of lower impact factor (cascade PR). The review-sharing systems outperformed or matched the performance of the conventional one in all peer-review efficiency metrics, and showed a large decrease in total time of the peer-review process and total time devoted by reviewers to complete all reports in a year.

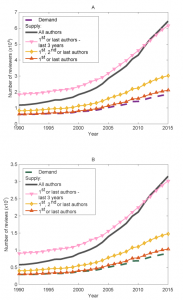

Burden

In this project, we used mathematical modeling to estimate the overall annual demand for and supply of peer review in biomedical research. We found that for 2015, across a range of scenarios, the supply of reviewers and reviews exceeded the demand by 15% to 249%. However, 20% of the researchers performed 69% to 94% of the reviews. Among researchers actually contributing to peer review, 70% dedicated 1% or less of their research work-time to peer review, while 5% dedicated 13% or more of it. An estimated 63.4 million hours were devoted to peer review in 2015, of which 18.9 million hours were provided by the top 5% of contributing reviewers.
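The concentration of effort reported above can be checked with simple arithmetic. The sketch below derives the share of hours contributed by the top 5% of reviewers from the two totals quoted in the text, and shows how such a share could be computed from per-reviewer data. The helper `top_share` and the example hours are hypothetical and are not taken from the study.

```python
def top_share(hours, top_fraction):
    """Share of all hours contributed by the top `top_fraction` of reviewers."""
    ranked = sorted(hours, reverse=True)
    k = max(1, round(len(ranked) * top_fraction))
    return sum(ranked[:k]) / sum(ranked)


# Figures quoted in the text (millions of hours, 2015).
total_hours = 63.4
top5_hours = 18.9
share_top5 = top5_hours / total_hours
print(f"Top 5% of reviewers: {share_top5:.1%} of all review hours")

# Hypothetical per-reviewer hours, for illustration only.
example_hours = [10, 1, 1, 1, 1, 1, 1, 1, 1, 2]
print(f"Top 20% in the toy example: {top_share(example_hours, 0.2):.0%}")
```

From the quoted totals, the top 5% of contributing reviewers account for a little under 30% of all hours devoted to peer review.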

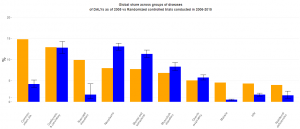

Does clinical research effort match public health needs?

A large-scale mapping of 115,000 randomized trials and 2.2 billion disability-adjusted life years.

Power and sample size calculation for meta-epidemiological studies

Meta-epidemiological studies are used to compare treatment effect estimates between randomized clinical trials with and without a characteristic of interest. In this method, one identifies a number of meta-analyses, covering a variety of medical conditions and interventions, that each include at least one trial with the characteristic and at least one trial without it. For each meta-analysis, treatment effect estimates are compared between trials with and without the characteristic (e.g., by estimating a ratio of odds ratios or a difference in standardized mean differences). The mean impact of the characteristic is then estimated across all meta-analyses.
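The two-step procedure described above can be sketched for binary outcomes as follows. This is a minimal illustration under stated assumptions, not the method of any particular study: it uses fixed-effect inverse-variance pooling at both steps (published analyses often use random-effects models), it assumes no trial has a zero cell, and all function names and trial counts are hypothetical.

```python
import math


def log_odds_ratio(events_t, n_t, events_c, n_c):
    """Log odds ratio and its variance for one trial (assumes no zero cells)."""
    a, b = events_t, n_t - events_t
    c, d = events_c, n_c - events_c
    return math.log((a * d) / (b * c)), 1 / a + 1 / b + 1 / c + 1 / d


def pool(estimates):
    """Fixed-effect inverse-variance pooling of (estimate, variance) pairs."""
    weights = [1 / var for _, var in estimates]
    total = sum(weights)
    pooled = sum(w * est for w, (est, _) in zip(weights, estimates)) / total
    return pooled, 1 / total


def log_ror(trials):
    """Log ratio of odds ratios (with vs without the characteristic)."""
    with_char = pool([log_odds_ratio(*t[:4]) for t in trials if t[4]])
    without_char = pool([log_odds_ratio(*t[:4]) for t in trials if not t[4]])
    return with_char[0] - without_char[0], with_char[1] + without_char[1]


# Hypothetical data: each trial is
# (events_treated, n_treated, events_control, n_control, has_characteristic).
meta_analyses = [
    [(10, 20, 10, 20, True), (10, 30, 10, 20, False)],
    [(15, 40, 20, 40, True), (12, 50, 18, 40, False), (8, 30, 12, 30, False)],
]

# Step 1: one log ROR per meta-analysis. Step 2: pool across meta-analyses.
mean_log_ror, variance = pool([log_ror(ma) for ma in meta_analyses])
half_width = 1.96 * math.sqrt(variance)
print("Mean ROR:", round(math.exp(mean_log_ror), 2))
print("95% CI:", round(math.exp(mean_log_ror - half_width), 2),
      "to", round(math.exp(mean_log_ror + half_width), 2))
```

The width of the resulting confidence interval depends on the number of meta-analyses and on the number of trials in each, which is why power and sample size need to be considered when planning such a study.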